The post RDF2NL: Generating Texts from RDF Data appeared first on DBpedia Association.

Hi DBpedians,

During the DBpedia Day in Leipzig, I gave a talk about how to use the facts contained in the DBpedia Knowledge Graph for generating coherent sentences and texts.

We essentially rely on Natural Language Generation (NLG) techniques to accomplish this task. NLG is the process of generating coherent natural language text from non-linguistic data (Reiter and Dale, 2000). Despite community agreement on the actual text and speech output of these systems, there is far less consensus on what the input should be (Gatt and Krahmer, 2017). NLG systems have taken a wide range of inputs, including images (Xu et al., 2015), numeric data (Gkatzia et al., 2014), and semantic representations (Theune et al., 2001).

Why not generate text from Knowledge graphs?

The generation of natural language from the Semantic Web was introduced some years ago (Ngonga Ngomo et al., 2013; Bouayad-Agha et al., 2014; Staykova, 2014). However, it has recently gained substantial attention, and some challenges have been proposed to investigate the quality of automatically generated texts from RDF (Colin et al., 2016). Moreover, RDF has demonstrated a promising ability to support the creation of NLG benchmarks (Gardent et al., 2017). Still, English is the only language that has been widely targeted. Thus, we proposed RDF2NL, which can generate texts in languages other than English by relying on different language versions of SimpleNLG.

What is RDF2NL?

Deep learning is an exciting avenue for NLG (Gatt and Krahmer, 2017) and has already shown promising results on RDF data (Sleimi and Gardent, 2016). Nevertheless, the morphological richness of some languages led us to develop a rule-based approach first, so that we could identify the challenges each language poses from the Semantic Web (SW) perspective before applying Machine Learning (ML) algorithms. RDF2NL can generate either a single sentence or a summary of a given resource. It is based on LD2NL by Ngonga Ngomo et al. and uses the Brazilian Portuguese, Spanish, French, German and Italian adaptations of SimpleNLG for the realization task.
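To make the rule-based idea concrete, here is a minimal sketch of turning one RDF triple into a sentence in more than one language. It is not RDF2NL's code and does not use SimpleNLG: the `TEMPLATES` lexicon, the `label` helper and the `verbalize` function are hypothetical names chosen for illustration, and a real realizer would handle morphology (gender, number, tense) rather than fill fixed templates.

```python
# Hypothetical per-language templates keyed by predicate local name.
# These are illustrative only and are not taken from RDF2NL or SimpleNLG.
TEMPLATES = {
    "en": {"birthPlace": "{s} was born in {o}."},
    "pt": {"birthPlace": "{s} nasceu em {o}."},
}


def label(uri: str) -> str:
    """Derive a readable label from the local name of a URI."""
    local = uri.rstrip("/").rsplit("/", 1)[-1]
    return local.replace("_", " ")


def verbalize(triple, lang="en"):
    """Verbalize a (subject, predicate, object) triple with a fixed template."""
    s, p, o = triple
    predicate = label(p)
    template = TEMPLATES[lang].get(predicate)
    if template is None:
        raise KeyError(f"no {lang} template for predicate {predicate!r}")
    return template.format(s=label(s), o=label(o))


triple = (
    "http://dbpedia.org/resource/Albert_Einstein",
    "http://dbpedia.org/ontology/birthPlace",
    "http://dbpedia.org/resource/Ulm",
)
print(verbalize(triple, "en"))
print(verbalize(triple, "pt"))
```

The point of the sketch is the separation between content (the triple) and language-specific realization (the templates), which is what allows the same facts to be rendered in several languages.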

An example of RDF2NL application:

We envisioned a promising application of RDF2PT: supporting the automatic creation of benchmarking datasets for Named Entity Recognition (NER) and Entity Linking (EL) tasks. In Brazilian Portuguese, there is a lack of gold standard datasets for these tasks, which makes it difficult for the scientific community to investigate these problems. Our aim was to create Brazilian Portuguese silver standard datasets that can be uploaded into GERBIL for easy evaluation. To this end, we implemented RDF2PT (the Portuguese version of RDF2NL) in BENGAL, which is an approach for automatically generating NER benchmarks based on RDF triples and Knowledge Graphs. This application has already resulted in promising datasets, which we have used to investigate the capability of multilingual entity linking systems to recognize and disambiguate entities in Brazilian Portuguese texts. You can find some results below:
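The reason generated text works as a silver standard is that the generator knows which entity each span of text came from, so the annotations come for free. The sketch below illustrates this under simplifying assumptions: the `build_annotated_sentence` function and the dictionary-based annotation format are hypothetical and are not BENGAL's implementation or the format GERBIL expects.

```python
def build_annotated_sentence(parts):
    """Join text parts into a sentence and record entity offsets.

    parts is a list of (text, uri) pairs; uri is None for plain text.
    Returns the sentence and a list of annotation dictionaries.
    """
    sentence = ""
    annotations = []
    for text, uri in parts:
        if uri is not None:
            # The entity starts where the sentence currently ends.
            annotations.append({
                "start": len(sentence),
                "end": len(sentence) + len(text),
                "surface": text,
                "uri": uri,
            })
        sentence += text
    return sentence, annotations


parts = [
    ("Albert Einstein", "http://dbpedia.org/resource/Albert_Einstein"),
    (" nasceu em ", None),
    ("Ulm", "http://dbpedia.org/resource/Ulm"),
    (".", None),
]
sentence, annotations = build_annotated_sentence(parts)
print(sentence)
for a in annotations:
    print(a["start"], a["end"], a["surface"], a["uri"])
```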

NER – http://gerbil.aksw.org/gerbil/experiment?id=201801050043

NED – http://gerbil.aksw.org/gerbil/experiment?id=201801110012

More application scenarios

- Summarize or explain KBs to non-experts

- Create news automatically (automated journalism)

- Summarize medical records

- Generate technical manuals

- Support the training of other NLP tasks

- Generate product descriptions (eBay)

Deep Learning into RDF2NL

After devising our rule-based approach, we realized that RDF2NL is good at selecting adequate content from the RDF triples, but the fluency of its generated texts remains a challenge. Therefore, we decided to move forward and work with neural network models to improve fluency, as they have already shown promising results in the generation of translations. We first focused on the generation of referring expressions, an essential part of text generation that decides how the NLG model presents the information about a given entity. For example, the referring expressions of the entity Barack Obama can be “the former president of the USA”, “Obama”, “Barack”, “He” and so on. Since then, we have been working on combining different NLG sub-tasks into single neural models to improve the fluency of our texts.
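To illustrate what the referring expression sub-task decides, here is a simple rule-based baseline, not the neural model described above. The `FORMS` table and the `choose_references` function are hypothetical: the first mention of an entity gets its full name, an immediately repeated mention gets a pronoun, and any other later mention gets a short name.

```python
# Hypothetical surface forms per entity; a neural model would learn such choices.
FORMS = {
    "Barack_Obama": {"full": "Barack Obama", "short": "Obama", "pronoun": "he"},
    "Michelle_Obama": {"full": "Michelle Obama", "short": "Michelle", "pronoun": "she"},
}


def choose_references(entity_sequence, forms=FORMS):
    """Pick one referring expression per mention using three simple rules."""
    seen = set()
    previous = None
    chosen = []
    for entity in entity_sequence:
        if entity not in seen:
            chosen.append(forms[entity]["full"])     # first mention
        elif entity == previous:
            chosen.append(forms[entity]["pronoun"])  # immediately repeated
        else:
            chosen.append(forms[entity]["short"])    # mentioned earlier
        seen.add(entity)
        previous = entity
    return chosen


mentions = ["Barack_Obama", "Barack_Obama", "Michelle_Obama", "Barack_Obama"]
print(choose_references(mentions))
```

A baseline like this ignores context such as sentence boundaries or competing entities of the same gender, which is part of why learned models are attractive for this step.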

GSoC on it – Stay tuned!

Until now, we have relied on different language versions of SimpleNLG for the realization task. Besides improving the fluency of our models, we are now investigating the generation of multiple languages with a single neural model. Our student has been working hard to provide nice results and we are close to the end of our GSoC project. So stay tuned to learn the outcome of this exciting project.

Many thanks to Diego for his contribution. If you want to write a guest post, share your results on the DBpedia Blog, and thus give your work more visibility and outreach, just ping us via dbpedia@infai.org.

Yours

DBpedia Association


The post A year with DBpedia – Retrospective Part Two appeared first on DBpedia Association.

Let the travels begin.

Welcome to Thessaloniki, Greece & ESWC

DBpedians from the Portuguese Chapter presented their research results during ESWC 2018 in Thessaloniki, Greece. The team around Diego Moussalem developed a demo to extend MAG to support Entity Linking in 40 different languages. A special focus was put on low-resource languages such as Ukrainian, Greek, Hungarian, Croatian, Portuguese, Japanese and Korean. The demo relies on online web services, which allow easy access to their entity linking approaches. Furthermore, it can disambiguate against DBpedia and Wikidata. Currently, MAG is used in diverse projects and has been widely used by the Semantic Web community. Check the demo via http://bit.ly/2RWgQ2M. Further information about the development can be found in a research paper, available here.


Welcome back to Leipzig, Germany

With our new credo “connecting data is about linking people and organizations”, halfway through 2018, we finalized our concept of the DBpedia Databus. This global DBpedia platform aims at sharing the efforts of OKG governance, collaboration, and curation to maximize societal value and develop a linked data economy.

With this new strategy, we wanted to meet some DBpedia enthusiasts of the German DBpedia Community. Fortunately, the LSWT (Leipzig Semantic Web Tag) 2018, hosted in Leipzig, home to the DBpedia Association, proved to be the right opportunity. It was the perfect platform to exchange ideas with researchers, industry and other organizations about current developments and future applications of the DBpedia Databus. Apart from hosting a hands-on DBpedia workshop for newbies, we also organized a well-received WebID tutorial. Finally, the event gave us the opportunity to position the new DBpedia Databus as a global open knowledge network that aims at providing unified and global access to knowledge (graphs).

Welcome down under – Melbourne, Australia

Further research results that rely on DBpedia were presented during ACL 2018 in Melbourne, Australia, from July 15th to 20th, 2018. The core of the research was DBpedia data taken from the WebNLG corpus, created for a challenge in which participants automatically converted non-linguistic data from the Semantic Web into text. Later on, the data was used to train a neural network model for generating referring expressions of a given entity. For example, if Jane Doe is a person’s official name, referring expressions for that person could be “Jane”, “Ms Doe”, “J. Doe”, or “the blonde woman from the USA”.


If you want to dig deeper but missed ACL this year, the paper is available here.

Welcome to Lyon, France

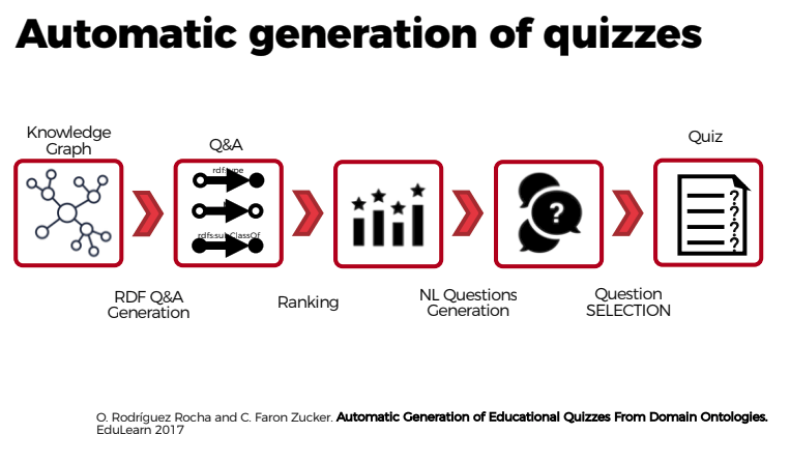

In July the DBpedia Association travelled to France. With the organizational support of Thomas Riechert (HTWK, InfAI) and Inria, we finally met the French DBpedia Community in person and presented the DBpedia Databus. Additionally, we got to meet the French DBpedia Chapter, researchers and developers around Oscar Rodríguez Rocha and Catherine Faron Zucker. They presented current research results revolving around an approach to automate the generation of educational quizzes from DBpedia. They wanted to provide a useful tool to be applied in the French educational system, that:

- helps to test and evaluate the knowledge acquired by learners and…

- supports lifelong learning on various topics or subjects.

The French DBpedia team followed a 4-step approach:

- Quizzes are first formalized with Semantic Web standards: questions are represented as SPARQL queries and answers as RDF graphs.

- Natural language questions, answers and distractors are generated from this formalization.

- Different strategies are defined to extract multiple choice questions, correct answers and distractors from DBpedia.

- A measure is defined for the information content of the elements of an ontology, and of the set of questions contained in a quiz.
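As a rough illustration of the first three steps, the sketch below pairs a SPARQL query with a natural language question and picks distractors from a pool of other candidates. It is not the French team's implementation: `QUERY_TEMPLATE`, `build_question` and the hard-coded candidate list are assumptions made for this example, and the query is only built as a string, not sent to an endpoint.

```python
import random

# Illustrative query: the formal representation of "What is the capital of X?".
QUERY_TEMPLATE = """
PREFIX dbo: <http://dbpedia.org/ontology/>
PREFIX dbr: <http://dbpedia.org/resource/>
SELECT ?answer WHERE {{ dbr:{country} dbo:capital ?answer . }}
"""


def build_question(country, correct, candidates, n_distractors=3, seed=0):
    """Build a multiple choice question with randomly chosen distractors.

    country is assumed to be a DBpedia local name (underscores, no spaces).
    """
    rng = random.Random(seed)  # seeded so the example is reproducible
    pool = sorted(set(candidates) - {correct})
    distractors = rng.sample(pool, n_distractors)
    options = distractors + [correct]
    rng.shuffle(options)
    return {
        "sparql": QUERY_TEMPLATE.format(country=country).strip(),
        "text": f"What is the capital of {country.replace('_', ' ')}?",
        "options": options,
        "answer": correct,
    }


cities = ["Paris", "Lyon", "Berlin", "Madrid", "Rome"]
question = build_question("France", "Paris", cities)
print(question["text"])
print(question["options"])
```

In practice the candidates would come from DBpedia itself, for example other resources of the same type as the correct answer, so that the distractors are plausible.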

Oscar R. Rocha and Catherine F. Zucker also published a paper explaining the detailed approach to automatically generate quizzes from DBpedia according to official French educational standards.


Thank you to all DBpedia enthusiasts that we met during our journey. A big thanks to

With this journey from Europe to Australia and back we provided you with insights into research based on DBpedia as well as a glimpse into the French DBpedia Chapter. In our final part of the journey coming up next week, we will take you to Vienna, San Francisco and London. In the meantime, stay tuned and visit our Twitter channel or subscribe to our DBpedia Newsletter.

Have a great week.

Yours DBpedia Association

